Have you ever wondered what it would be like to stand on an asteroid? A rugged terrain of boulders and craters lies beneath your feet, while the airless sky above you opens onto the star-spangled blackness of space.

It sounds like the opening scene for a science fiction movie. But this month, I met with students on the surface of an asteroid, all without leaving my living room.

The solution to this riddle, as you have probably guessed from the title of this article, is virtual reality.

Virtual reality (or VR) allows you to enter a simulated environment. Unlike an image or even a video, VR lets you look in all directions, move freely and interact with objects, creating an immersive experience. An appropriate analogy would be to imagine yourself transported into a computer game.

It is therefore perhaps not surprising that a major application for VR has been the gaming industry. However, interest has recently grown in educational, research and training applications.

The current global pandemic has forced everyone to seek online alternatives for their classes, business meetings and social interactions. But even before this year, the need for alternatives to in-person gatherings was growing. International conferences are costly for both the wallet and the environment, and they are susceptible to political friction, all of which undermines the goal of sharing ideas within a field. Meanwhile, experiences such as planetariums and museums only reach people who live within comfortable travelling distance.

Standard solutions have included web broadcasts of talks, or interactive meetings via platforms such as Zoom or Google Hangouts. But these fail to capture the post-talk discussions that are as productive at a conference as the talks themselves. Nor can you talk to people individually without arranging a separate meeting.

Virtual reality offers an alternative that is closer to the experience of in-person gatherings, and whose disadvantages are offset by opportunities that are impossible in a regular meeting.

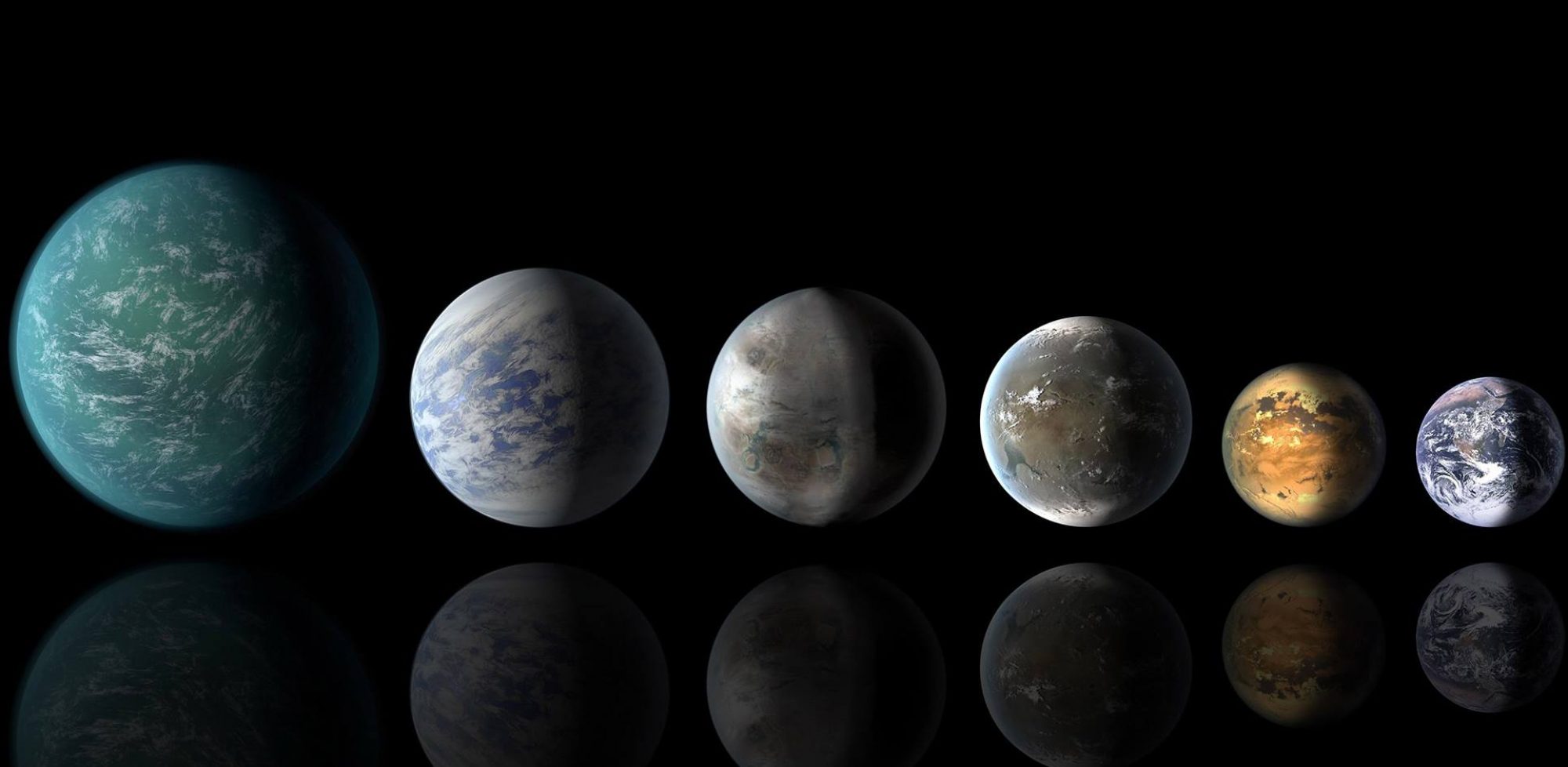

Imagine teaching a class on the solar system, where you could move your classroom from the baked surface of Mercury to the sulphuric clouds of Venus and on to the icy moons of Jupiter. Or presenting the latest research results on organics found on Mars while standing on the delta fan in Jezero Crater, where water once flowed over the red planet. While few humans have ventured beyond the Earth, our robotic missions have returned stunning images that can be used to create immersive virtual reality environments.

The applications are not only space-based, but can extend to any location. The origins of life could be debated beside a deep-sea hydrothermal vent, or training for work in high-radiation areas could be given without putting the trainees at risk. You could even give a lecture in a traditional classroom, but have students from all over the world sitting in front of you.

The virtual world is also becoming part of scientific research. A team of scientists studying what was claimed to be the oldest fossilized remnant of life on Earth travelled to Greenland last autumn for further analysis. They not only walked the entire area to place the finding in a broader geological context, but also brought along a team of three to create a virtual reality rendering of the outcrop where the discovery was made. The goal was to build a prototype for the wider scientific study of such remote palaeobiological (and, it turns out, controversial) sites.

The organizer of the Greenland trip was Abigail Allwood, an astrobiologist and geologist at NASA's Jet Propulsion Laboratory and principal investigator of PIXL, an X-ray instrument on the Mars rover scheduled to launch this summer. Allwood hopes that their virtual reality work in Greenland can serve as a model for virtual reality science on Mars.

While these sound like exciting possibilities, what is the reality of virtual reality? In particular, how easy is it to share information within a simulated environment? To find out, I asked students from the Yokohama International School if they would like to join me on a virtual reality tour of the Hayabusa2 space mission.

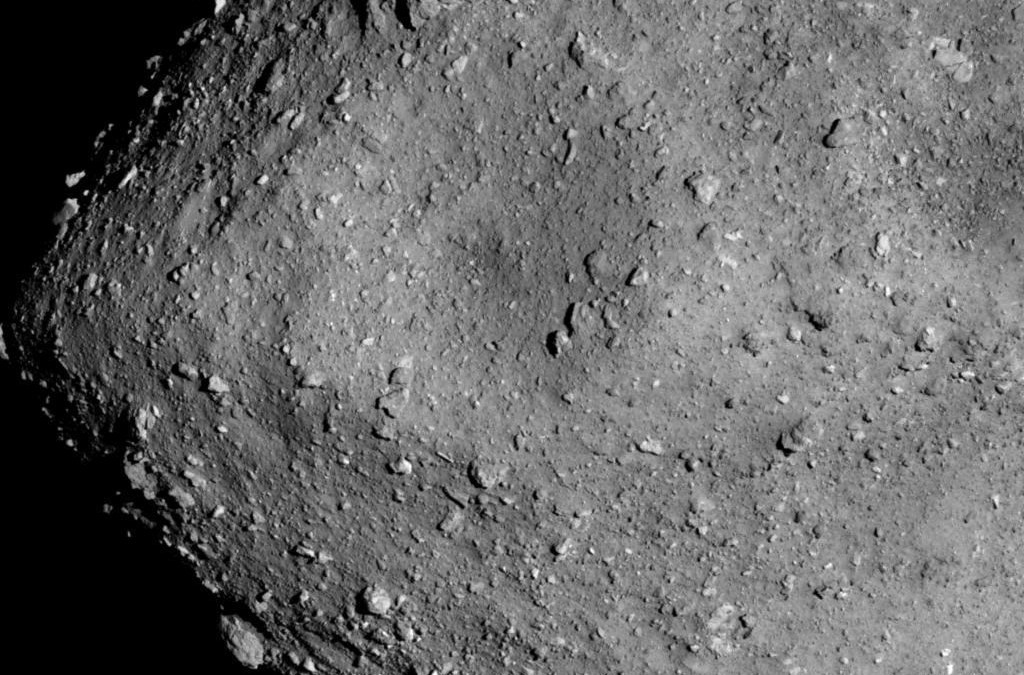

Hayabusa2 is an asteroid explorer and sample return mission led by the Japan Aerospace Exploration Agency (JAXA). In June 2018, the spacecraft arrived at Ryugu, a carbonaceous asteroid that may be kin to the meteorites that pelted the early Earth and delivered water and organics to our planet. After approximately 18 months of remote observations and a series of landing operations that collected two samples from the asteroid surface, the spacecraft departed for home. Hayabusa2 will arrive back at Earth at the end of this year.

We began our virtual reality experience in a replica of a large, open meeting room. This was a virtual reproduction of the interaction space at the Earth-Life Science Institute (ELSI) in Tokyo. One difference between the VR version and the real location was that our virtual room also contained a large model of the Hayabusa2 spacecraft. Models of the two rovers and the lander that explored the asteroid surface sat on one of the tables, and a large screen played an animation of how the spacecraft collected a sample of material from the asteroid.

The virtual reality ELSI and spacecraft had been designed by OmniScope, a start-up company focused on visualizations for VR, which created several different scenes and models for the talk. A virtual reality scene can be constructed using 3D modelling software, and the accurate topography of real places can be reproduced from photographs using techniques such as photogrammetry.

The most immersive way to enter the virtual reality space is with a headset that sits over your eyes and allows you to look around the room as you normally would. However, requiring specific equipment would prevent some people from participating, negating part of the advantage of a virtual environment. For this reason, OmniScope recommended we use Mozilla Hubs: a free, open-source social space for virtual reality. Multiple users can meet in the same virtual area (a three-dimensional version of a chat room) and join either through a VR headset or simply through a web browser on a computer, tablet or smartphone.

While the experience is not quite as immersive if you use a web browser, you can still move in all directions, talk to people and interact with objects in the room.

It is also very easy to try. Go to https://hubs.mozilla.com/ and ‘create a room’ to explore one of their virtual reality scenes, from a desert island to a wizard’s library.

Back in virtual ELSI, we began by talking about the mission. I started with regular slides, as I would in a normal classroom, since this was a familiar way of sharing information. But we swiftly moved on to examining the spacecraft, describing the instruments on Hayabusa2 by pointing to them on the model. Everyone could hold the model, turn it around and get a feel for the design. We could also make duplicate copies so that more than one person could examine it at once.

We then left virtual ELSI, switching the virtual reality space to a recreation of the mythological palace of Ryugu-jo, from which the asteroid takes its name.

After the launch of Hayabusa2, JAXA held a contest to name the asteroid destination. The winning entry came from the Japanese folktale of Urashima Taro. In this story, the fisherman Urashima rescues a sea turtle, which rewards him with a journey to the underwater palace of Ryugu-jo. Urashima meets Princess Otohime and stays with her at Ryugu-jo for three days. But when Urashima returns home, he discovers that centuries have passed while he was away. In his confusion, he opens a box given to him by Otohime. Urashima is enveloped in a fog that clears to leave him an old man, for the box had contained his age. In a similar way, Hayabusa2 will bring back our planet’s age in the sample gathered from Ryugu, which may reveal how our planet evolved from a lifeless world into the one we know today.

After discussing this tale, we left the mythological Ryugu-jo for the real asteroid Ryugu. OmniScope had created this virtual reality scene from photographs taken by the Hayabusa2 mission. Standing close to the site where the spacecraft collected a sample from the surface, we discussed the difficulty of finding a good landing site amid the rocky terrain. We finished our tour by watching footage of the actual touchdown.

The tour had gone well and we all enjoyed exploring the scenery, which brought the mission to life. Interacting with one another in the virtual environment felt very natural. Voices are louder when you stand closer to people, making it easy to address a group or have a quiet talk with an individual.

There were a few challenges. Because we could not see one another’s real faces, it was harder to anticipate questions. As a result, the students were reluctant to interrupt, and we occasionally spoke over one another. Using a VR headset transmits more body language, as people can see the movement of your head and arms. However, you cannot take or use notes, as the resolution of the environment makes text difficult to read. Slides are therefore most effective when limited to a few headline words and pictures.

So in conclusion: is this the future? Will both science research and education move over to virtual reality and abandon the real world?

While it is unlikely we would want to replace all in-person gatherings, I feel virtual reality offers an important opportunity for sharing information with colleagues, students and friends. The environment is inspiring, and the ability to join from your living room lowers the barriers to sharing ideas and meeting people, which should be a goal of any plan to take us into the future. It is a true way to bring ‘many worlds’ to life.